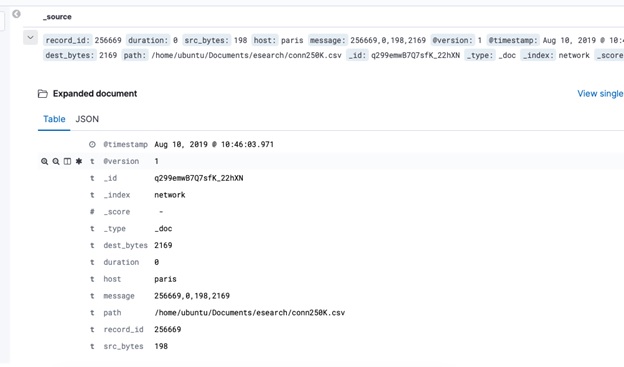

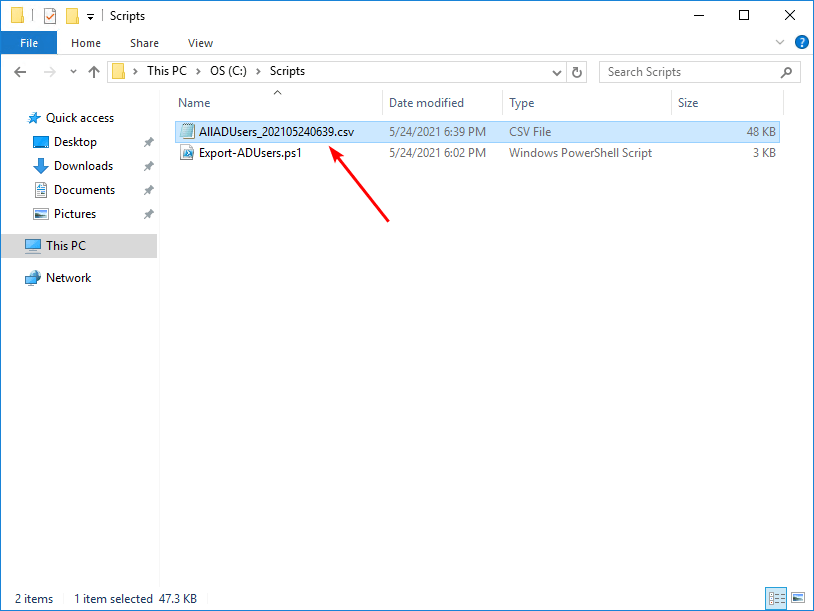

You would go to the Discover section, highlight the required fields, and have something like this. Let’s say you want to export some of the fields of the whole dataset to a CSV file. Let’s take the kibana_sample_data_ecommerce index as an example. connection through SSL, batch size for the extraction). number of processes to exploit when multiprocessing, max size of the partial CSV files created during the elaboration) and to the Elasticsearch instance (e.g. fields to export, time field to sort on), to the machine specs (e.g. It allows users to specify a bunch of parameters to perfectly tune the extraction according to their needs (e.g. The tool is intended for anybody who needs a relatively fast export of data stored in a Elasticsearch index and doesn’t want to get their hands dirty with code and technicalities.

If you read this article down to here, sneaking through the quips and quotations, you might already imagine the purpose of the tool (one of them, at least). This is how elasticsearch_tocsv came to light, or that’s how I remember it, at least. or someone should have anyway. Fact is, I heard it and acted accordingly.įabulous secret powers were revealed to me the day I held aloft my magic keyboard and said, “By the power of Python ! I have the Power!” After this enlightening experience, I figured I had to do something. I made a couple of adjustments, hardcoded something here and there, and got the job done in a couple of hours or so. Whatever it was, it was too slow.Įventually, after a day of failed tests, disappointing research and admittedly, tears, I decided to dust off a Python script I had written some months prior in to extract, manipulate and reindex some documents for another project. Something linked to how Logstash queues the fetched documents yet to process, or possible another internal mechanism. The continuous access to the disk tremendously slowed down the process. It took 2 hours to write less than 5 million documents, which means it would have taken 20 hours to extract everything. Actually, it seemed as if the more it continued to fetch documents, the slower it got. I pumped up the JVM a bit, increasing the memory to 6 GB and the batch size to 2000. I quickly wrote down a pipeline which took in input the Elasticsearch index and output a CSV file. When I got back on track I found that the report had been generated, but a not-so-reassuring “ Max size reached” write had been appended to the report name. Downloading it, I noticed only 160K documents had been exported. Some errors linked to the CircuitBreakingException (.) Data too large (.) were raised (to prevent some OutOfMemoryError possibly). 
What’s more, after 17 hours - assuming that none of the extractions would have timed out and failed, or succeeded at the second or third attempt, and assuming I had nothing better to do than setting up an alarm to manually start a new 500K-document export as soon as one finished - I still would have had to post-process all the resulting CSV files to stitch them together. So I tried increasing the, raising it from 2 minutes to 5 minutes and eventually 10 minutes, still keeping the amount of data capped at 500K. Even if it worked, it would have taken around 10 minutes to extract 1% of the total amount of data. That means almost 2 hours to extract 5 million documents and almost 17 hours to extract the requested amount of docs. I went down to 500K documents and still got a timeout error. I kept going with binary splitting, reducing the amount of documents from 50 million or so down to 25M, then 12M, 6M, 3M… and nothing. Then I tried reducing the time-span, in order to reduce the number of documents and maybe get by with 2 or 3 separate exports. Why? Because on my first attempt at extracting such a large amount of documents directly from the Discover, the disappointing result was an error due to an excessive number of timeouts. I’ll be in touch in half an hour at most”. Surprise, surprise, that half an hour became an hour, and then hours and finally a day. My brazen answer was: “Consider it done, boss. Recently though, I was asked for a CSV extraction in the order of tens of millions of documents. I’ve been using the stack for quite a while now, and every time I’ve needed a CSV report I’ve crawled back to that feature.
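To give a rough idea of what a scripted export of this kind can look like, here is a minimal sketch. It is not the code of elasticsearch_tocsv, and not the script mentioned above: the function names (`hits_to_rows`, `write_csv`, `scan_index`), the host URL and the field names in the usage comment are assumptions made for illustration. It relies on `helpers.scan` from the official `elasticsearch` Python client, which wraps the scroll API and yields documents lazily, so memory use stays flat no matter how many documents the index holds.

```python
import csv
from typing import Iterable, Iterator, Sequence


def hits_to_rows(hits: Iterable[dict], fields: Sequence[str]) -> Iterator[list]:
    """Yield one CSV row per hit, keeping only the requested top-level fields."""
    for hit in hits:
        source = hit.get("_source", {})
        # A field missing from a document becomes an empty cell.
        yield [source.get(field, "") for field in fields]


def write_csv(hits: Iterable[dict], fields: Sequence[str], path: str) -> int:
    """Stream hits into a CSV file and return the number of rows written."""
    count = 0
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(fields)  # header row
        for row in hits_to_rows(hits, fields):
            writer.writerow(row)
            count += 1
    return count


def scan_index(host: str, index: str, fields: Sequence[str], batch_size: int = 2000):
    """Return a lazy iterator over every document of an index (scroll API)."""
    # Imported here so the CSV helpers above work without the client installed.
    from elasticsearch import Elasticsearch, helpers

    client = Elasticsearch(host)
    query = {"_source": list(fields), "query": {"match_all": {}}}
    return helpers.scan(client, index=index, query=query, size=batch_size)


# Hypothetical usage against a local cluster holding the Kibana sample data:
# fields = ["customer_full_name", "order_date", "taxful_total_price"]
# hits = scan_index("http://localhost:9200", "kibana_sample_data_ecommerce", fields)
# print(write_csv(hits, fields, "ecommerce.csv"))
```

Keeping the CSV writing separate from the fetching means the same writer can be fed by several scans, for example one per time slice when the work is split across processes. Nested fields such as `geoip.city_name` would need extra handling that this sketch leaves out.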
